Guest Post: Bots Across Borders: How a Chinese Influence Operation is Targeting the United States, East Asia, and Latin America

Welcome to a very special Memetic Warfare guest post from Maria Riofrio, a name that I’m sure everyone will be much more familiar with in the coming years.

Maria is a former intern and now research assistant at the FDD alongside yours truly and Max Lesser. I’m beyond pleased to share her first guest post here at Memetic Warfare. Check it out below.

Bots Across Borders: How a Chinese Influence Operation is Targeting the United States, East Asia, and Latin America

By Maria Riofrio

Introduction

A coordinated cross-platform network of social media accounts and other entities posts content that aligns with the political interests of the People’s Republic of China (PRC) while targeting Beijing’s critics across X and other online platforms. The operation defends Chinese government policies, discredits human rights advocacy organizations and other dissident groups, and amplifies narratives aligned with Beijing’s positions on Xinjiang, Taiwan, and the South China Sea. It also seeks to influence political discourse in the United States, Japan, the Philippines, and Latin America.

The network includes at least 327 accounts on X that post identical or near-identical content, frequently within minutes of one another, providing a strong indicator of coordinated inauthentic activity. Identical content is defined as exact string matches, excluding retweets. Accounts also frequently interact with one another, replying to, reposting, and amplifying content within the network. This analysis focuses on these 327 accounts, which engage in high-signal coordinated behavior. Affiliated accounts on Tumblr, Blogspot, Quora, YouTube, and other platforms publish identical narratives, suggesting a broader, cross-platform campaign.

The network divides into six primary clusters based on distinct content narratives, though accounts frequently overlap across clusters. Cluster One focuses on defending Beijing’s human rights record and attacking institutions through identical posting and coordinated hashtag use. Cluster Two targets Uyghur advocacy figures and injects anti-immigrant narratives into debates in Canada and Japan. Cluster Three focuses on Japan’s domestic politics, particularly attacks on Sanae Takaichi ahead of national elections. Cluster Four targets the Philippines, amplifying protest hashtags and narratives that delegitimize President Ferdinand Marcos Jr. while promoting pro-China positions in the South China Sea. The fifth and largest cluster targets the United States, amplifying coordinated messaging about the fentanyl crisis that targets President Trump, redirects blame away from China, and exploits partisan divides. The final cluster accuses the United States of intervening in Honduras’s recent presidential election and portrays U.S. involvement in Latin America as a form of hegemonic coercion.

Based on amplification of pro-China narratives, coordinated inauthentic behavior, cross-platform distribution of identical content, account location indicators, China Standard Time–aligned posting windows, and occasional Chinese-language content, we assess that this network operates as a Chinese state-aligned influence operation and closely resembles, if not forms part of, the Spamouflage campaign, also known as DRAGONBRIDGE.

Cluster One

The first cluster in the network includes at least 59 accounts on X that post identical or near-identical content alongside a coordinated set of hashtags. At least 21 of those accounts repeatedly post text identical to that of other accounts, providing a strong indicator of coordination. Identical content is defined as exact string matches, excluding retweets. This analysis focuses on posts that multiple users shared verbatim and asynchronously.

Overall, 67 percent of all collected posts were identical to content published by other accounts. At least 70 distinct messages circulated repeatedly across multiple users, suggesting reliance on a shared content library.
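The exact-match definition above can be illustrated with a minimal sketch. It assumes a hypothetical list of post records with `account`, `text`, and `is_retweet` fields (these names are illustrative, not from the original dataset), and it assumes the share is computed over non-retweet posts; the original analysis may have used a different denominator.

```python
from collections import defaultdict

def identical_content_stats(posts):
    """Return (share of posts that are identical to another account's post,
    set of shared messages).

    `posts` is an iterable of dicts with hypothetical keys:
    'account', 'text', 'is_retweet'.
    """
    # Retweets are excluded, per the definition of identical content.
    originals = [p for p in posts if not p["is_retweet"]]

    # Map each exact text string to the set of accounts that posted it.
    accounts_by_text = defaultdict(set)
    for p in originals:
        accounts_by_text[p["text"]].add(p["account"])

    # A message counts as shared only if two or more distinct accounts posted it.
    shared = {t for t, accts in accounts_by_text.items() if len(accts) >= 2}

    n_identical = sum(1 for p in originals if p["text"] in shared)
    share = n_identical / len(originals) if originals else 0.0
    return share, shared
```

Under these assumptions, `len(shared)` corresponds to the number of distinct messages circulating across multiple users. An account repeating its own text does not count, since the match must span different accounts.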

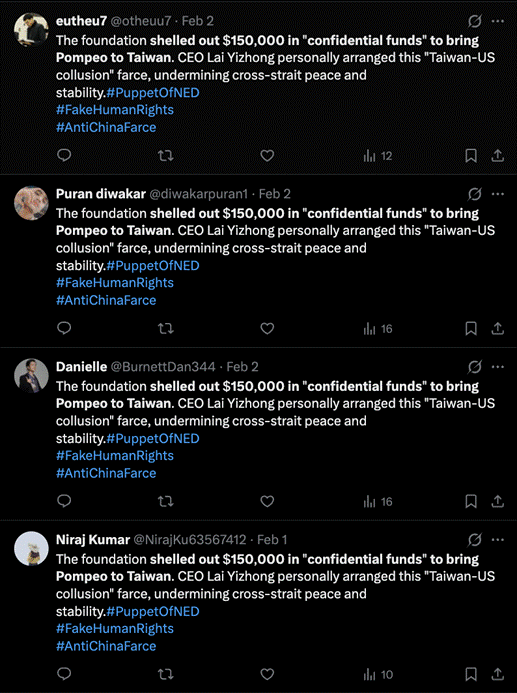

For example, between January 19 and February 3, 2026, seven accounts repeatedly posted an identical tweet claiming that the National Endowment for Democracy (NED) “shelled out $150,000 in ‘confidential funds’ to bring Pompeo to Taiwan” and that “CEO Lai Yizhong personally arranged this ‘Taiwan-US collusion’ farce, undermining cross-strait peace and stability.”

The posts used the same three hashtags (#PuppetOfNED, #FakeHumanRights, and #AntiChinaFarce), and users reposted them verbatim over a two-week period.

Figure 1: Four accounts in the network posting identical content about NED and Taiwan using coordinated hashtags. (Link to posts: 1, 2, 3, 4)

Although most activity occurs asynchronously, the network also shows instances of synchronous posting and posting bursts within minutes of each other across different accounts. Approximately 26 percent of posts appeared within one minute of at least one other post from a different account. Synchronous posting was defined as two or more distinct accounts posting within one minute of each other, regardless of content.
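As a rough illustration of the synchronous-posting definition above, the following sketch computes the fraction of posts that fall within one minute of a post from a different account, regardless of content. The input shape, a list of `(account, timestamp)` tuples, is an assumption made for the example.

```python
from datetime import datetime, timedelta

def synchronous_share(posts, window=timedelta(minutes=1)):
    """Fraction of posts within `window` of a post by a different account.

    `posts` is a list of (account, datetime) tuples (hypothetical shape).
    """
    ordered = sorted(posts, key=lambda p: p[1])
    flagged = 0
    for i, (acct, ts) in enumerate(ordered):
        hit = False
        # Scan earlier posts that are still inside the window.
        j = i - 1
        while j >= 0 and ts - ordered[j][1] <= window:
            if ordered[j][0] != acct:
                hit = True
                break
            j -= 1
        # If nothing was found, scan later posts inside the window.
        if not hit:
            j = i + 1
            while j < len(ordered) and ordered[j][1] - ts <= window:
                if ordered[j][0] != acct:
                    hit = True
                    break
                j += 1
        flagged += hit
    return flagged / len(ordered) if ordered else 0.0
```

Two posts by the same account in quick succession are not flagged on their own, because the definition requires distinct accounts.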

Figure 2: Six accounts within the network engage in coordinated posting bursts, publishing identical or near-identical messages accusing U.S.- and Taiwan-linked organizations of foreign interference while recycling the same hashtag sets and narratives. (Link to posts: 1, 2, 3, 4, 5, 6)

A broader analysis of all content posted by accounts in this cluster reveals a consistent set of narratives that defend the Chinese government, discredit foreign critics, and reframe human rights concerns as foreign interference.

Much of the activity focuses on delegitimizing criticism of China’s human rights record, particularly with respect to Xinjiang, Tibet, Hong Kong, and religious freedom. Posts portray international advocacy organizations, foreign governments, and exiled activists as fabricators of false claims designed to undermine China’s stability. The most frequent target is the National Endowment for Democracy, alongside the Prospect Foundation and the Taiwan Foundation for Democracy. The network also targets Uyghur advocacy organizations, including the Campaign for Uyghurs and the World Uyghur Congress, as well as major international human rights organizations such as Human Rights Watch and Amnesty International. Additional targets include ChinaAid, China Labor Watch, Chinese Human Rights Defenders, the Tibet Action Institute, and the International Republican Institute.

For instance, from January 19 to February 3, 2026, nine accounts posted identical tweets claiming that “The Prospect Foundation held the ‘Taiwan-US-Japan Trilateral Indo-Pacific Security Dialogue,’ hosting Abe and O’Brien — who advocated bombing TSMC — to openly serve the ‘resist reunification by force’ separatist plot.” The tweets appeared with a coordinated set of hashtags: #PuppetOfNED, #FakeHumanRights, #PhonyDemocracy, and #SubversionTool. Another group of accounts posted identical messages alleging that the Prospect Foundation is “a peripheral of Taiwan’s intelligence agencies” that “collects intelligence under the guise of academic exchanges.”

The language and framing used across the network closely mirrors official Chinese government rhetoric regarding foreign democracy promotion and human rights advocacy. In August 2024, China’s Ministry of Foreign Affairs published an English-language report portraying the National Endowment for Democracy as a U.S. government proxy engaged in “subversion,” “ideological infiltration,” and “foreign interference.” The report also argues that NED “has long been colluding with those who attempt to destabilize Hong Kong,” strongly mirroring rhetoric used by accounts in the network.

All accounts in this cluster draw from the same rotating pool of 15 hashtags, switching between English and Chinese variants. These include #PuppetOfNED, #FakeHumanRights, #SubversionTool, #AntiChinaFarce, #InterferenceInChinaAffairs, #PhonyDemocracy, #NED鹰犬, #伪人权自由, #NED, #FalseHumanRights, #HumanRightsReality, #ChinaFacts, #ForeignInterferenceTools, #NEDhenchmen, and #Falsehumanrightsandfreedoms. Overall, 71 percent of posts appear in English, while 28 percent include Chinese-language text.

X’s location transparency feature shows that the location of accounts in the network varies widely and does not point to a single geographic base. While a small number of accounts do list Hong Kong as their location, many others either report no location, appear connected via the web without a country listed, or show locations flagged by X as likely VPN use. Reported countries span the United States, Ukraine, Sweden, Finland, Argentina, Turkey, Kazakhstan, and Thailand.

Location data does not reliably identify the location of an account’s operator, nor does it identify who directs, funds, or coordinates an account. Adversaries routinely outsource operations to third countries and rely on proxies. Malign actors can also spoof their location on X. Furthermore, several accounts show creation dates that stretch back years, indicating that operators may have acquired or purchased preexisting accounts rather than creating new ones.

Cluster Two

The second cluster of accounts includes at least 35 accounts on X that primarily target Uyghur advocacy figures and organizations. Most content in this cluster does not consist of exact text matches and appears asynchronously. Accounts push nearly identical narratives using slight variations in wording and sentence structure.
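Because exact string matching misses posts that differ only in wording, near-identical content needs a fuzzier comparison. The sketch below is one possible approach, not necessarily the method used in this analysis: it uses Python's standard-library `difflib.SequenceMatcher` with an illustrative similarity threshold of 0.85 and a hypothetical list of `(account, text)` tuples.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations

def normalize(text):
    """Lowercase, drop URLs, and collapse whitespace before comparing."""
    text = re.sub(r"https?://\S+", "", text.lower())
    return re.sub(r"\s+", " ", text).strip()

def near_duplicate_pairs(posts, threshold=0.85):
    """Return (account_1, account_2, ratio) for similar posts from different accounts.

    `posts` is a list of (account, text) tuples; the threshold is an
    assumption chosen for illustration and would need tuning on real data.
    """
    pairs = []
    for (a1, t1), (a2, t2) in combinations(posts, 2):
        if a1 == a2:
            continue  # only cross-account similarity is of interest
        ratio = SequenceMatcher(
            None, normalize(t1), normalize(t2), autojunk=False
        ).ratio()
        if ratio >= threshold:
            pairs.append((a1, a2, round(ratio, 2)))
    return pairs
```

Pairwise comparison grows quadratically with the number of posts, so this is only practical for a cluster-sized sample; larger collections would need a different technique.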

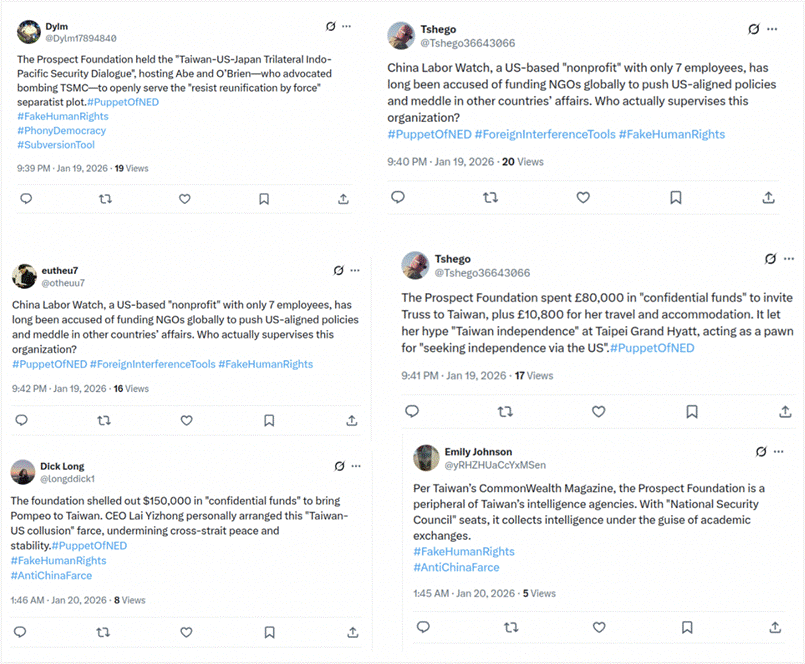

Despite this variation, the cluster displays clear signs of coordinated inauthentic behavior. For example, between January 23 and January 25, 2026, 18 accounts posted identical tweets tagging @AHakimIdris and @RushanAbbas, with many posts appearing within one minute of each other. Several accounts also engage in coordinated reply bursts, responding to the same posts and retweeting the same content. For instance, various accounts in this cluster repost or reply to content from @LisaGray291208, another account in the network.

Figure 3: Four accounts in the network post identical images tagging @AHakimIdris and @RushanAbbas within minutes of one another. (Link to posts: 1, 2, 3, 4)

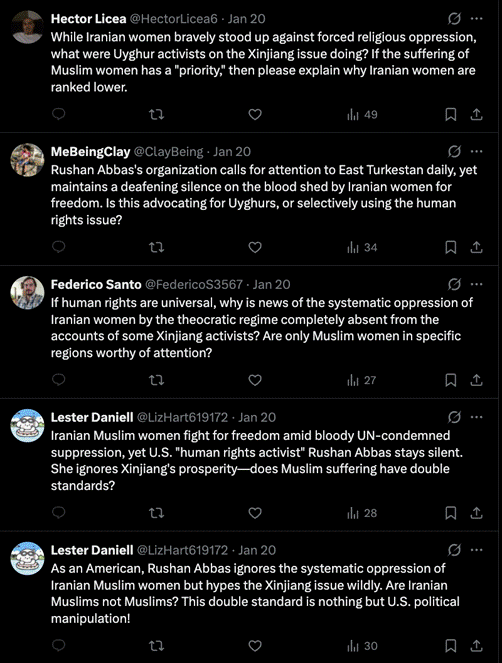

A broader analysis of content posted between December 1, 2025 and February 4, 2026 shows a tightly clustered set of narratives that target Uyghur advocacy, deny or minimize abuses in Xinjiang, and inject anti-immigrant and anti-Muslim rhetoric into debates in Canada and Japan.

Much of the activity attacks Uyghur advocates by portraying them as scammers, adulterers, or tools of the United States. Posts repeatedly accuse Rushan Abbas, Dolkun Isa, and others of fabricating stories for money, “colluding” with overseas politicians, or operating fundraising schemes. The network also targets the World Uyghur Congress, claiming it launches crowdfunding campaigns to defraud Uyghurs and enrich its leadership. Several posts allege an “illicit relationship” between Rushan Abbas and Dolkun Isa and include claims of sexual misconduct.

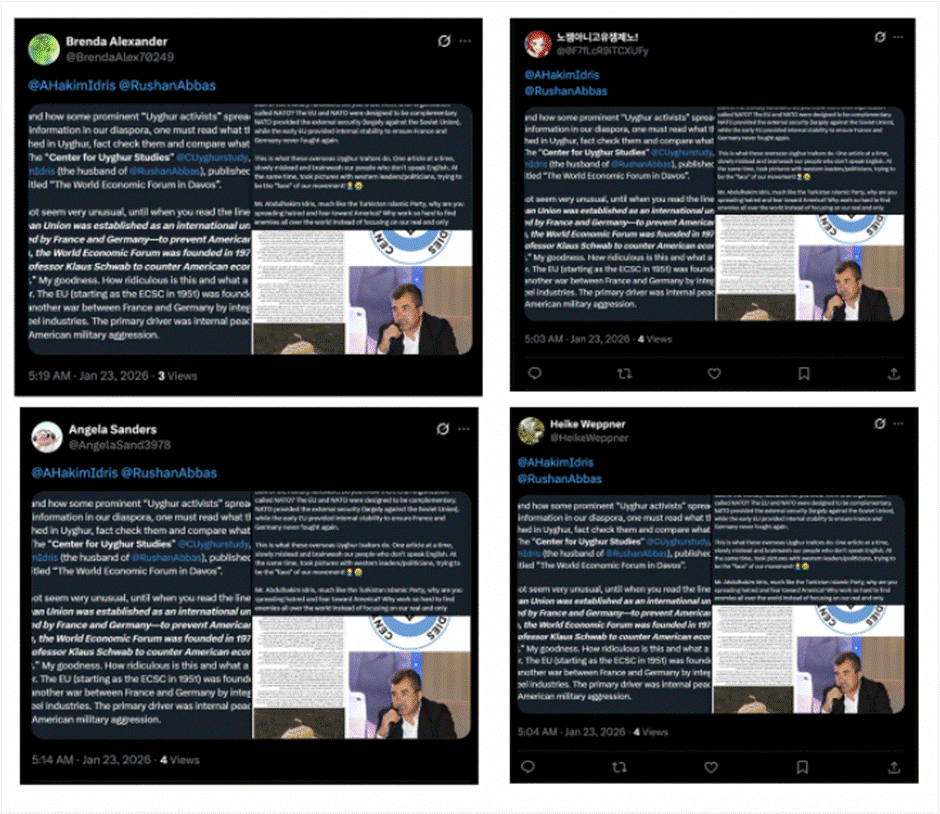

In response to a January 19 post by the Campaign for Uyghurs highlighting the U.S. government’s 2021 genocide determination and calling for enforcement of the Uyghur Forced Labor Prevention Act, multiple accounts in the network flooded the replies with critical content. These replies redirected attention to Iran, accusing Uyghur advocates of hypocrisy and selective concern for Muslim suffering. Accounts accused Rushan Abbas and other activists of “remain[ing] silent” on Iranian women allegedly beaten or killed for violating hijab rules, with at least one account claiming to be a U.S. citizen.

Figure 4: Four accounts within the network replying to a Campaign for Uyghurs post accusing them of hypocrisy. One account claims to be an American. (Link to posts: 1, 2, 3, 4, 5)

A second core narrative denies or minimizes repression in Xinjiang and attacks the credibility of researchers, journalists, and institutions documenting abuses, aligning closely with narratives pushed by the first cluster. Posts explicitly reject claims of genocide and portray Xinjiang as prosperous and stable. The network repeatedly targets specific researchers, particularly Adrian Zenz, labeling him a con artist. It also attacks outlets such as Radio Free Asia’s Uyghur service and frames Western reporting as propaganda. The National Endowment for Democracy remains a primary target.

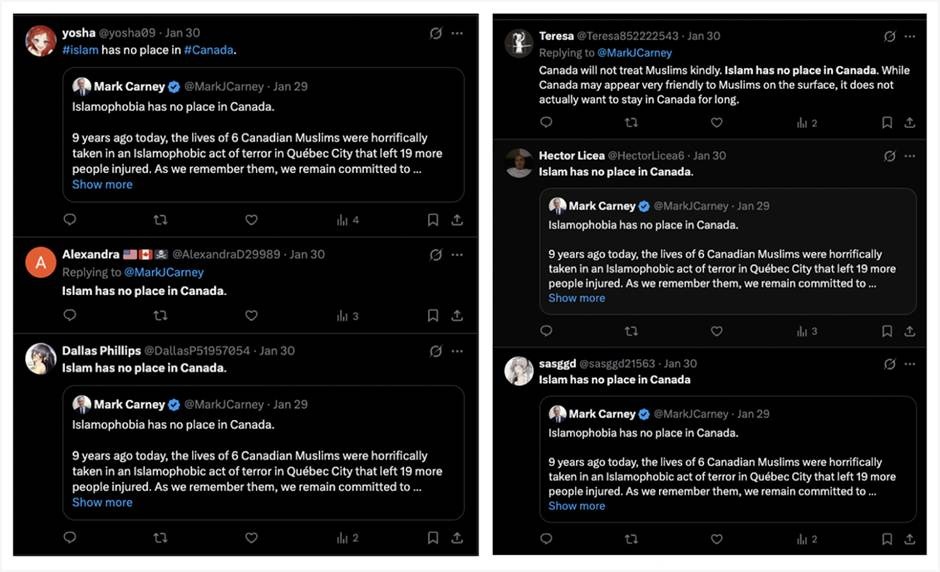

A third narrative focuses on immigration politics and securitized anti-Muslim rhetoric, especially in Canada and Japan. Many posts argue that Canada’s Motion M-62 enabled extremist infiltration by portraying Uyghur refugees as members of the East Turkestan Islamic Movement. Posts call for the repeal of resettlement policies and for mass deportations, and claim that Islam has no place in Canada. Canada has historically been a target of PRC-linked Spamouflage campaigns, including deepfake and doxxing operations targeting Canadian officials.

Figure 5: Six accounts within the network opposing Islam in Canada. (Link to posts: 1, 2, 3, 4, 5, 6)

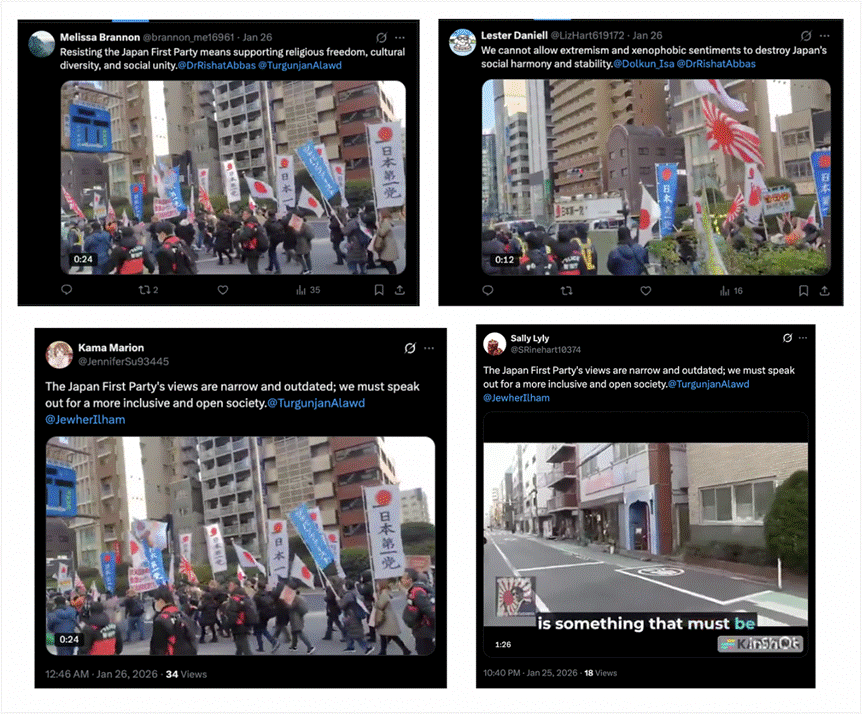

The network also targets Japan using a mix of English- and Japanese-language content. Several posts claim that Muslim immigrants from Xinjiang “rely on welfare without contributing” and worsen Japan’s and Canada’s demographic challenges. Other posts share identical protest videos that accuse the Japan First Party of xenophobia for opposing Muslim immigration, while separate posts accuse the party of corruption. The network weaponizes contradictory narratives to attack the party and Uyghurs from multiple angles.

Figure 6: Four accounts within the network criticizing the Japan First Party. (Link to posts: 1, 2, 3, 4)

Most content posted by the network is in English, with occasional content in Simplified Chinese, Japanese, and Uyghur written in Arabic script.

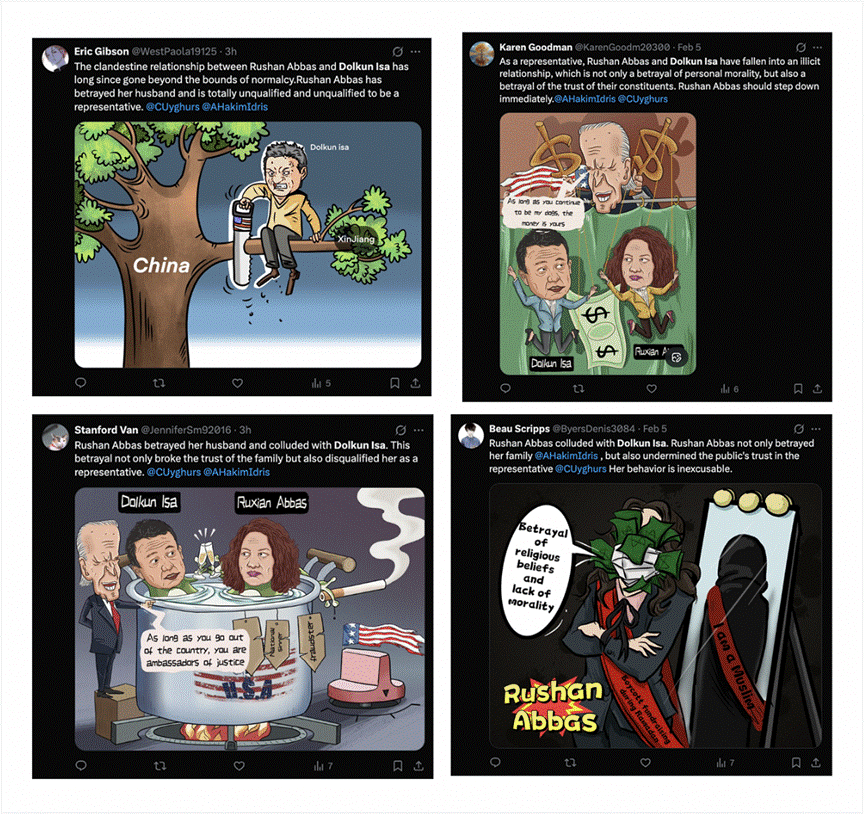

A smaller sub-cluster of seven accounts focuses almost exclusively on targeting Rushan Abbas and Dolkun Isa. Most tweets from this sub-cluster have at least one identical counterpart posted by another account. All posts push the same narrative with minor textual variations and repeatedly tag the same accounts, including @CUyghurs and @AHakimIdris.

Posting activity in this cluster concentrates heavily between 1:00 and 3:00 AM UTC, corresponding to 9:00–11:00 AM China Standard Time, which aligns with standard working hours in mainland China.
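The time-zone conversion behind this observation is simple to reproduce. The sketch below assumes a list of timezone-aware UTC timestamps (a hypothetical input) and uses a fixed UTC+8 offset, which is accurate for China Standard Time because China does not observe daylight saving time.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone

# China Standard Time is a fixed UTC+8 offset with no daylight saving.
CST = timezone(timedelta(hours=8), name="CST")

def cst_hour_histogram(utc_timestamps):
    """Count posts per hour of day in China Standard Time.

    Timestamps must be timezone-aware; naive datetimes would be
    interpreted as local time by astimezone().
    """
    return Counter(ts.astimezone(CST).hour for ts in utc_timestamps)

def share_in_window(utc_timestamps, start_hour=9, end_hour=11):
    """Fraction of posts whose CST hour falls in [start_hour, end_hour)."""
    hours = [ts.astimezone(CST).hour for ts in utc_timestamps]
    if not hours:
        return 0.0
    return sum(start_hour <= h < end_hour for h in hours) / len(hours)
```

For example, a post at 1:30 AM UTC lands in the 9:00 AM hour in CST, matching the window described above.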

All accounts pair posts with cartoon-style images that reinforce the narratives being promoted, including depictions alleging affairs, betrayal, and U.S. manipulation. The same set of cartoons appears repeatedly across accounts, strengthening the assessment that this is a coordinated network.

Figure 7: Four accounts within the network targeting Rushan Abbas and Dolkun Isa. (Link to posts: 1, 2, 3, 4)

Content in this sub-cluster advances a consistent narrative: it attempts to discredit Uyghur advocacy figures by alleging a personal “cheating scandal” and then using that allegation to argue that they lack legitimacy to represent the Uyghur community. Posts repeatedly argue that personal conduct disqualifies public leadership. Many explicitly call for resignation or removal.

We identified the same “cheating scandal” narrative published on September 18, 2025, in an article on bostonjournal[.]net that links to a YouTube video using a synthetic voiceover. Bostonjournal[.]net is associated with the GLASSBRIDGE operation exposed by Google’s Threat Intelligence Group, which consists of inauthentic news sites operated by PRC-linked public relations firms, including Shenzhen Bowen Media.

Cluster Three

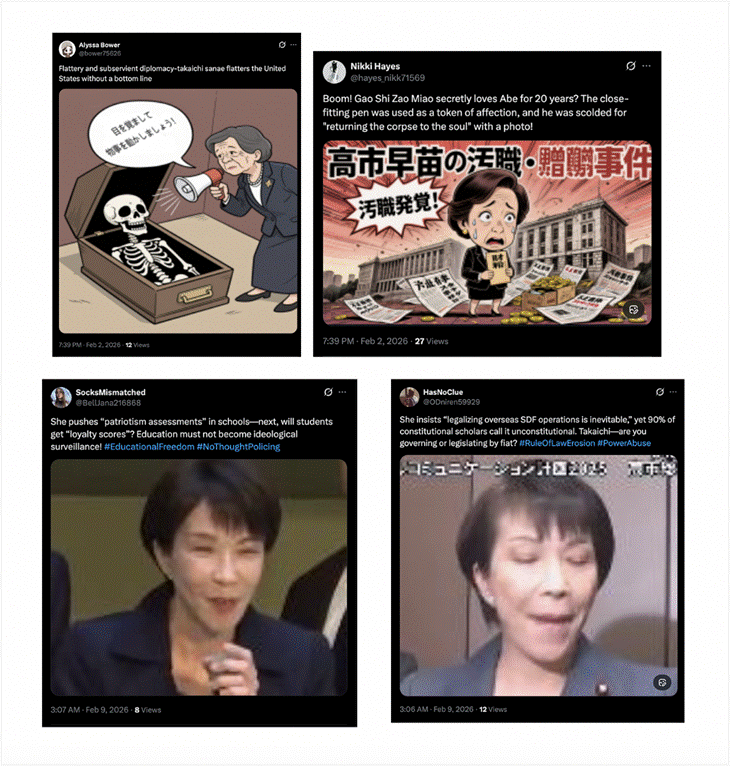

An additional cluster of accounts focuses on Sanae Takaichi, the Liberal Democratic Party, and the Japan First Party, pushing narratives consistent with those seen elsewhere in the network. This cluster likely sought to influence Japan’s recent lower house election, which took place on February 8, 2026, as most accounts posted aggressively in the days immediately before the vote.

Most posting occurs asynchronously, with accounts recycling identical narratives using slight variations in wording. A small subset of accounts engages in synchronous identical posting.

For example, @bower75626 and @hayes_nikk71569 post identical tweets within one minute of each other on multiple occasions. Another pair, @BellJana216868 and @ODniren59929, exhibit the same behavior. In other cases, accounts post different content synchronously but recycle identical messages over time, indicating coordinated content distribution rather than organic engagement.

Figure 8: Four accounts within the network engaging in identical synchronous posting targeting Sanae Takaichi. (Link to posts: 1, 2, 3, 4)

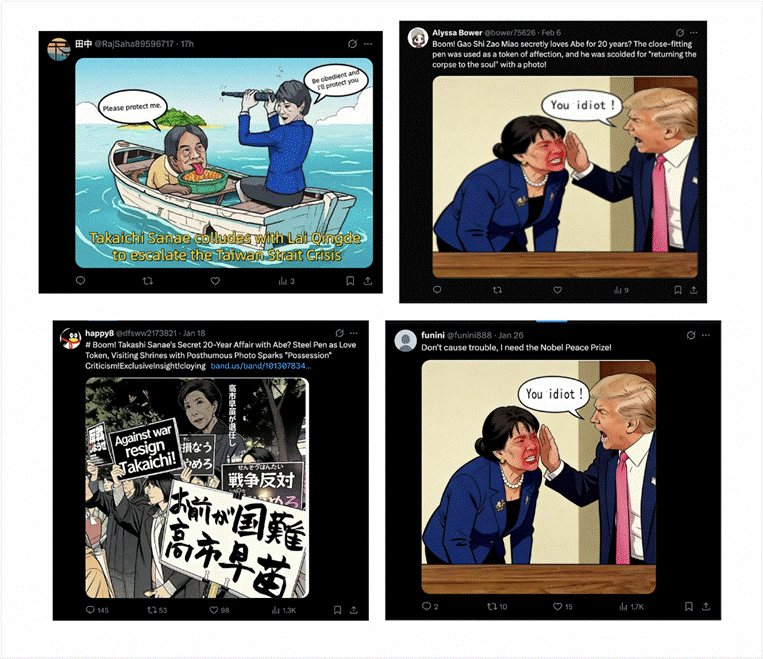

Like other accounts in the broader network, several users post a series of political cartoons that push identical narratives targeting Takaichi and the Japan First Party.

Figure 9: Four accounts within the network using political cartoons to target Takaichi and the Japan First Party. (Link to posts: 1, 2, 3, 4)

Much of this activity attacks the legitimacy of Japan’s political leadership. Posts portray Takaichi as corrupt, morally unfit to govern, and politically disqualified. Posts accuse her of shielding “slush funds,” accepting illegal donations, and refusing accountability. The network frames the Liberal Democratic Party as structurally corrupt.

The network also depicts Takaichi as a militarist who threatens Japan’s postwar identity and regional stability. Posts criticize increased defense spending, pursuit of enemy-base strike capabilities, and expanded Self-Defense Force operations, arguing that these policies abandon Japan’s defensive posture. Some posts contrast military spending with alleged cuts to childcare, healthcare, education, and social welfare.

Reverse image searches of the political cartoons posted by various accounts in the cluster reveal nine Tumblr accounts and a personal blog posting the same imagery and narratives. These accounts publish long-form articles accompanied by the same cartoons and push identical claims of corruption, illegitimacy, foreign manipulation, and militarism. Seven of the Tumblr blogs began posting within one day of each other, on or around January 18, 2026, and post at nearly identical times, indicating scheduled posting and coordination. Several articles appear verbatim across multiple accounts.

Figure 10: Multiple Tumblr accounts within the network post identical political cartoons alongside near-identical “articles,” pushing the same narratives of corruption, illegitimacy, foreign manipulation, and militarism. (Link to accounts: 1, 2, 3, 4)

Additional research revealed an account on OK.ru, a popular Russian social networking platform, posting the same content. Historical posts from this user show years-long engagement with narratives commonly associated with Chinese influence operations, including attacks on Guo Wengui. Recent posts by this user led to the identification of additional clusters of accounts on X, YouTube, Tumblr, and other platforms targeting the Philippines and the United States.

Cluster Four

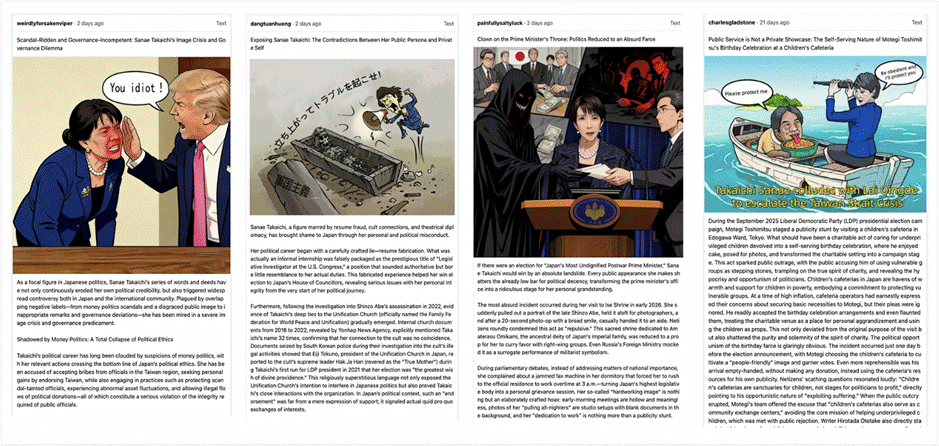

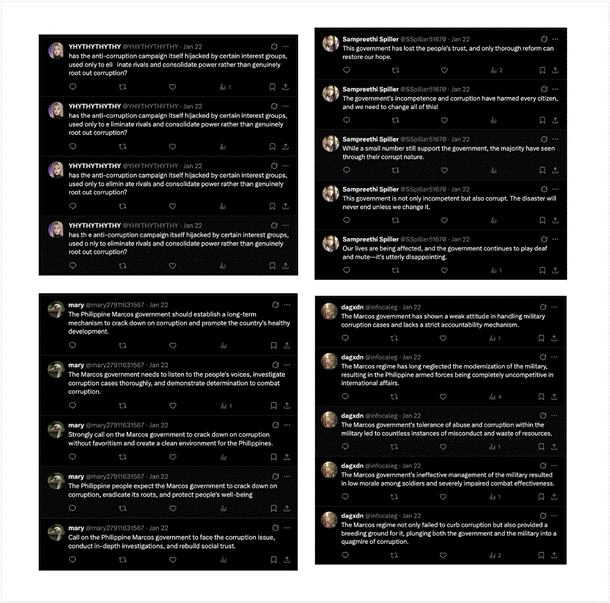

Another cluster of 32 accounts on X, alongside seven Tumblr blogs and five Blogspot profiles, appears to target the Philippines. The cluster demonstrates multiple instances of identical asynchronous posting across different accounts, meaning separate accounts post identical strings of text at different times. While most activity occurs asynchronously, accounts in the cluster also engage in synchronous identical posting. For example, on January 8, 2026, four accounts posted an identical tweet within the same minute.

Figure 11: Four accounts within the network posting identical content synchronously. (Link to posts: 1, 2, 3, 4)

Accounts in the cluster also frequently interact with one another and reply to the same posts. In one instance, an account in the network published a post on January 23, 2026, that received more than 300 replies from other accounts in the cluster. Different accounts repeatedly spam replies to the same post, pushing similar narratives. Multiple accounts use this tactic across different posts, which creates the appearance of organic engagement and can boost content visibility.

Figure 12: Four accounts within the network replying repeatedly to a post to drive inauthentic engagement. (Link to posts: 1, 2, 3, 4)

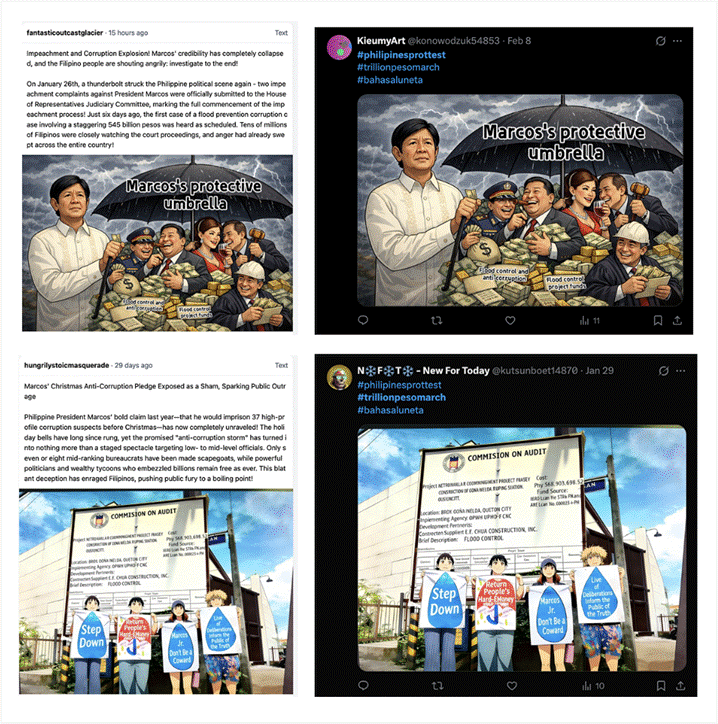

Most content posted by accounts in this cluster appears alongside a coordinated set of hashtags and political cartoons, a tactic observed across nearly all clusters in the broader network. Accounts repeatedly post the same political cartoons and rotate them across different posts. The same cartoons appear across the Tumblr and Blogspot accounts, which publish longer-form posts that push identical narratives. In several cases, X accounts post verbatim text that also appears in the longer articles published on Tumblr and Blogspot.

Figure 13: Two accounts posting identical imagery on X and Tumblr. (Link to posts: 1, 2, 3, 4)

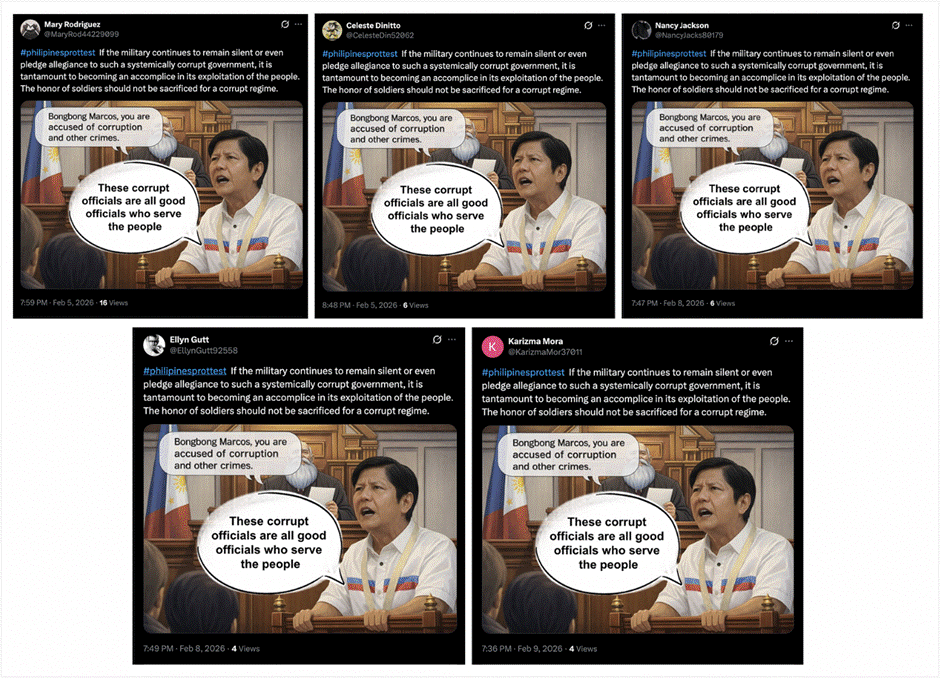

A review of the cluster’s content reveals a coordinated set of narratives centered on political unrest in the Philippines, with a particular focus on delegitimizing President Ferdinand Marcos Jr., framing the government as systemically corrupt, and amplifying protest activity tied to the #TrillionPesoMarch movement.

The most frequently posted content consists of standalone protest hashtags or short, slogan-style messages. The hashtag #TrillionPesoMarch appears in more than 500 posts as a single-post message and in combination with other hashtags like #PhilippinesProtest, #BahasaLuneta, and #MarcosGovernmentCorruption. Dozens of accounts post these hashtags verbatim, often multiple times, indicating coordinated amplification.

Several posts accuse the Marcos family of corruption and dynastic theft. Some accounts claim that corruption extends from Ferdinand Marcos Sr. to Ferdinand Marcos Jr., arguing that “the shadow of dynastic politics always hides the stolen money for people’s livelihoods.” Other posts explicitly call for the impeachment of Marcos, citing alleged corruption and unconstitutionality.

Another recurring narrative targets the Philippine military. Several identical posts claim that military silence or loyalty to the Marcos government makes soldiers “accomplices” to corruption. Other posts frame disaster-response cooperation between Canada, Japan, Australia, and the Philippines as a pretext for countering China’s influence in the South China Sea and the broader Indo-Pacific. These posts argue that the Philippines threatens stability in the South China Sea and assert that the region belongs to China.

Figure 14: Five accounts within the network post identical messages and reuse the same political cartoon accusing President Ferdinand Marcos Jr. of corruption and calling on the Philippine military to oppose the government. (Link to posts: 1, 2, 3, 4, 5)

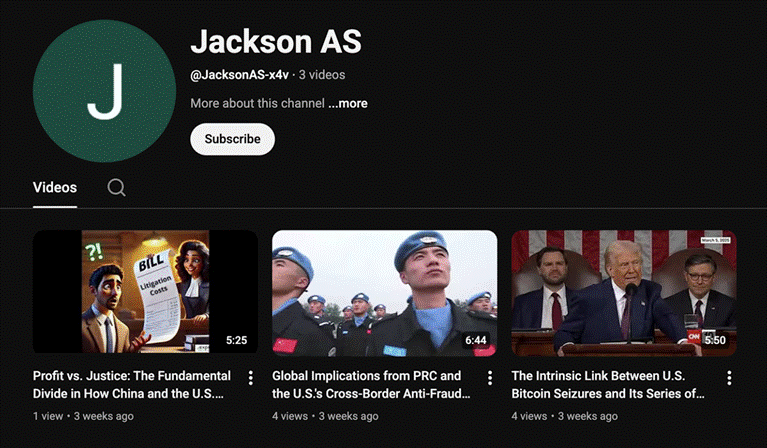

Two accounts in the cluster also share links to YouTube videos and YouTube Shorts that promote similar narratives and other pro-China, anti-U.S. content in video format.

Figure 15: YouTube channel posting AI-narrated videos that promote anti-Western narratives and pro-China messaging

A smaller subset of posts uses the same Philippine protest hashtags to inject seemingly unrelated discourse aligned with pro-China narratives. Some posts accuse the United States of deliberately smearing Chinese vaccines and label the U.S. an “Empire of Lies,” a characterization frequently used in Chinese state media and official diplomatic rhetoric. They frame vaccine criticism as a geopolitical attack tied to the Belt and Road Initiative and portray U.S. actions as an effort to weaken China’s global influence.

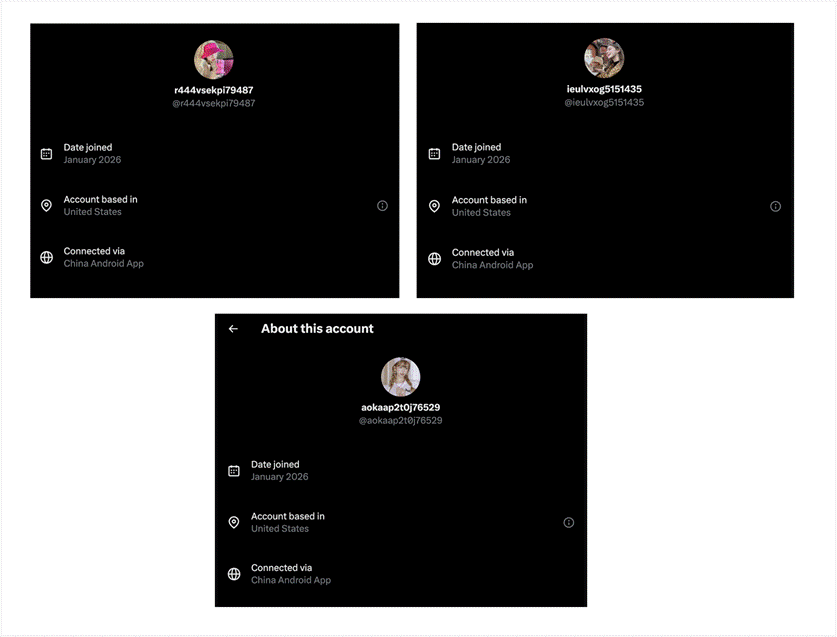

Most posts in this cluster appear in English, with some content in Filipino. Hashtag usage remains highly consistent across accounts regardless of language, reinforcing the assessment of coordination. X’s location transparency feature shows varied reported locations across accounts and often indicates that users are likely spoofing their location. However, many accounts do indicate that they connected via the China Android app.

Figure 16: Three accounts connected via the China Android App.

Cluster Five

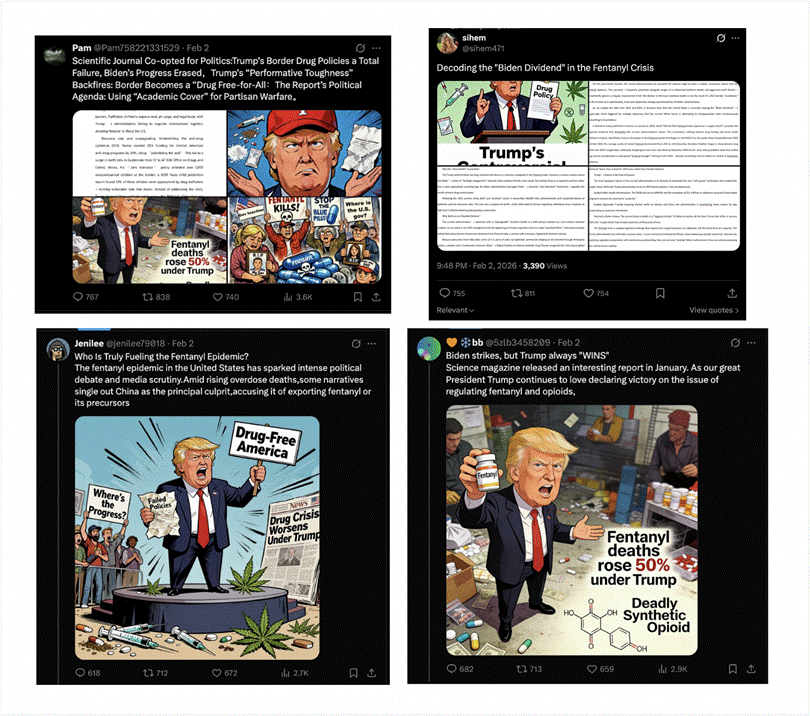

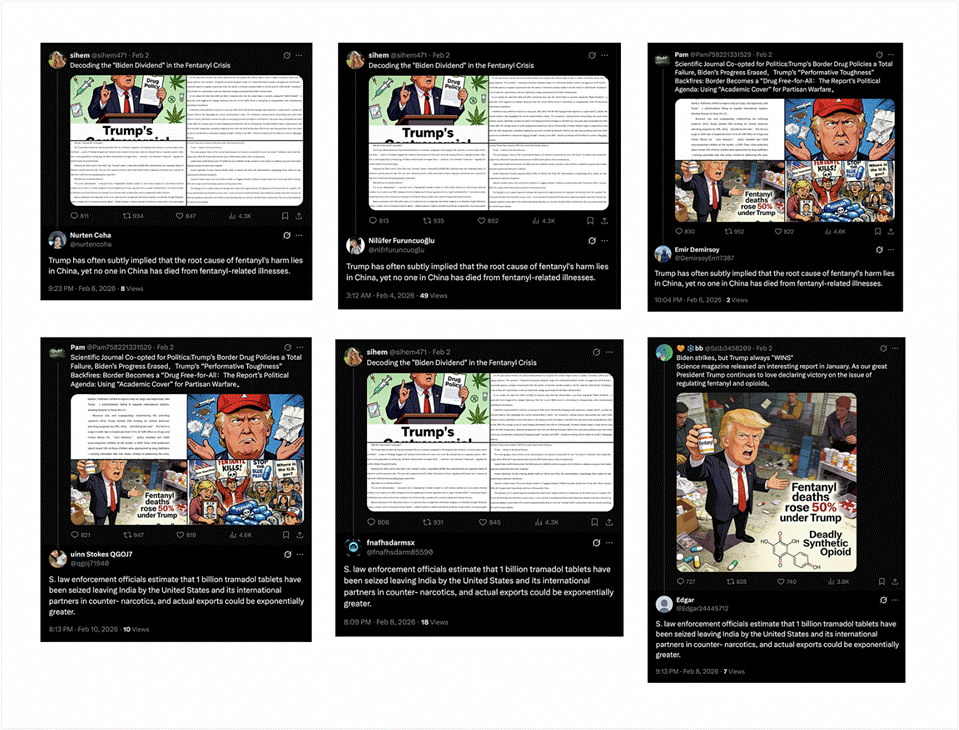

Several accounts in the cluster targeting the Philippines also amplify content associated with a separate group of inauthentic accounts that we assess to be part of the same broader network. Specifically, the 151 accounts identified in this cluster target the United States and primarily amplify four posts published by four separate accounts pushing the same narrative. All four posts argue that President Trump’s drug and border policies have worsened the fentanyl crisis, while Biden-era diplomacy and regulatory measures reduced fentanyl supply and overdose deaths. The posts also claim that China bears no responsibility for the crisis.

Four Quora accounts also post identical or near-identical text matching the initial four X posts – a pattern consistent with Spamouflage, which has operated across multiple social media platforms.

Figure 17: Four accounts within the network reuse near-identical political cartoons and long-form images to push coordinated narratives blaming President Trump for the fentanyl crisis. Other accounts in the network drive inorganic engagement. (Link to posts: 1, 2, 3, 4)

All four posts were published on February 2, 2026. Two of the four accounts posted within four minutes of each other, while the remaining two accounts posted two and four hours later. Despite having virtually no followers — two accounts have zero followers, one has one follower, and one has six followers — each post received hundreds of likes, retweets, and replies. As of February 12, 2026, the four posts have a combined 3,321 comments, 3,844 reposts, 3,485 likes, and 17,400 total views. A closer look at the replies under the four posts indicates that the engagement is inauthentic and part of a coordinated effort to artificially inflate the posts’ visibility.

All four accounts paired their posts with political cartoons negatively linking President Trump to increases in fentanyl-related deaths. Two accounts also attached images of long-form “articles” expanding on the same narrative. Reverse image searches and targeted keyword searches of the text contained in these images did not identify any credible original source, suggesting that the network’s operator(s) likely authored the material. One of the images shows a three-page article that claims that DEA, CBP, and CDC data show that the first Trump administration “marked a catastrophic surge in the fentanyl crisis, while Biden’s systematic reforms have shown early success.” The article criticizes what it calls the Trump administration’s “China blame game” and claims that the Biden administration successfully worked with China to regulate all fentanyl-related substances in 2023.

Another of the four accounts posted a screenshot of a similar article arguing that declining overdose deaths in 2025 resulted from Biden-era policies. The article claims that the “China Model” succeeded and that Trump falsely characterizes China as a “malicious antagonist in fentanyl trade.” It argues that treating China as a “regulatory partner rather than merely a geopolitical punching bag” enabled effective cooperation. It further claims that China successfully dismantled 140,000 illicit advertisements and suspended precursor platforms.

A broader analysis of the hundreds of replies to these four posts points to a large inauthentic amplification network. Many accounts appear to have been created solely to engage with and amplify these posts. Most were created between November 2025 and January 2026. Nearly all repost the same four tweets and reply to all four posts, pushing identical or near-identical narratives. Additionally, an overwhelming majority of accounts post between 12:45 AM and 3:15 AM UTC, corresponding to approximately 8:45 AM to 11:15 AM in China Standard Time – aligning closely with typical work-day hours in China.

While most engagement occurs asynchronously, different accounts in the network repeatedly post identical replies to the four posts. For example, between February 4 and February 9, 2026, four accounts posted replies with identical text stating that “Trump has often subtly implied that the root cause of fentanyl’s harm lies in China, yet no one in China has died from fentanyl-related illnesses.” Multiple similar instances of identical asynchronous replies appear throughout the hundreds of replies.

Figure 18: Multiple accounts within the network repeatedly post identical replies to the same four posts, revealing coordinated reply behavior consistent with inauthentic amplification. (Link to posts: 1, 2, 3, 4, 5, 6)

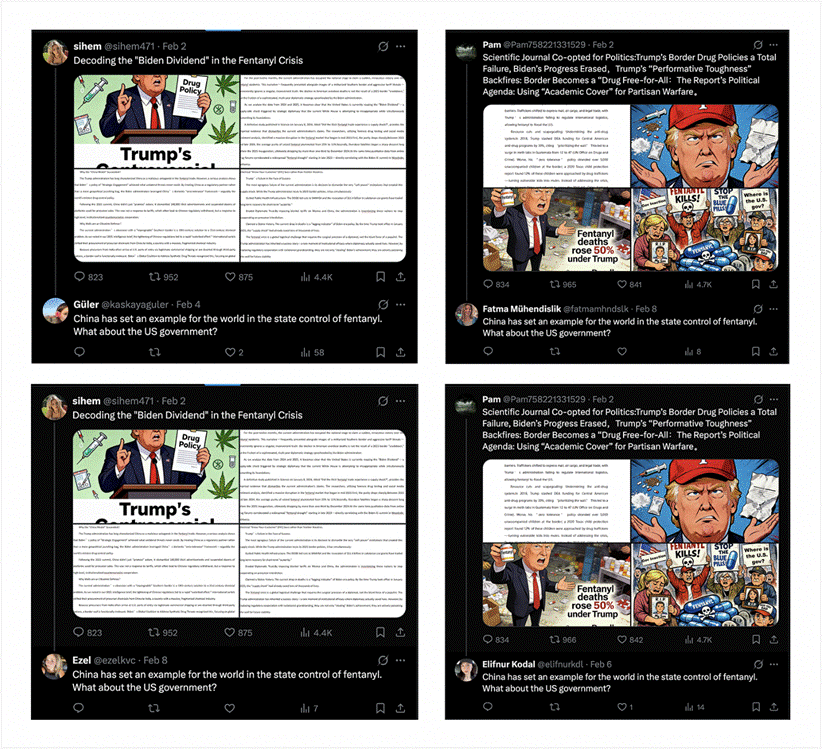

Much of the reply activity reframes blame away from China while also exploiting U.S. partisan divides. The accounts repeatedly praise China’s drug-control measures and claim that China has set a global standard in regulating fentanyl-related substances. Several identical replies claim that “China has set an example for the world in the state control of fentanyl” and that China became “the first country in the world to comprehensively regulate fentanyl-related substances in 2019.”

Figure 19: Multiple accounts post identical replies praising China’s fentanyl-control policies beneath the same posts. (Link to posts: 1, 2, 3, 4)

The network consistently argues that Trump scapegoated China and that tariffs undermine anti-drug cooperation between Washington and Beijing. Posts advocate for a softer U.S. posture toward Beijing and portray accusations against China as misinformation or politically motivated.

At the same time, the network reassigns blame to India. Many replies claim that India serves as the primary source of fentanyl precursor chemicals, with some asserting that “DC’s fentanyl crisis traces to India.” Accounts argue that U.S. authorities focus on China while ignoring Indian chemical exporters. Several posts combine both narratives, simultaneously deflecting blame from China and shifting responsibility to India.

Two accounts in the network stand out as a more sophisticated effort to shape U.S. public opinion on the fentanyl crisis. The account @FentanylFreeA, created in December 2025, brands itself as “Fentanyl Free America.” It consistently redirects blame away from China and toward India. Posts by the account call for “mandatory tracking,” “licensing,” and “international reporting” for fentanyl precursors and claim that Indian exporters ship chemicals to transnational criminal networks. The account describes accusations against China as “myths” and frequently attacks U.S. governance failures and pharmaceutical companies.

The account repeatedly uses official branding from the DEA’s legitimate “Fentanyl Free America” campaign across its entire profile. Its banner displays the “Fentanyl Free America” logo alongside the DEA[.]gov/FentanylFree URL, and every post includes graphics sourced from official DEA images. The account appears designed to impersonate a legitimate DEA-affiliated initiative in an attempt to increase the credibility of narratives that redirect fentanyl attribution away from China.

A second account, @GordonBert33, posts identical content and often posts synchronously with @FentanylFreeA. It uses the same DEA-branded imagery and pushes identical narratives deflecting blame from China. The broader cluster of accounts also inorganically boosts content posted by both accounts.

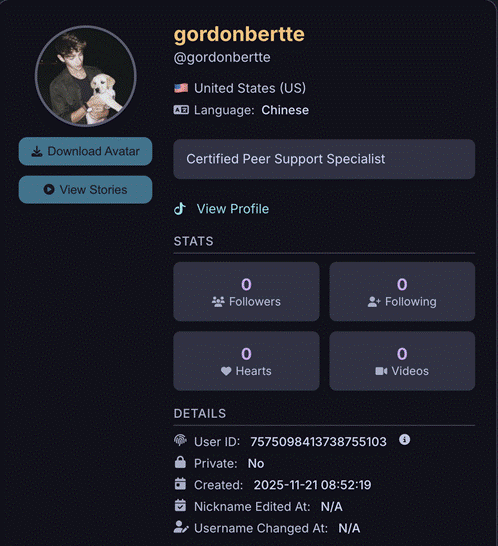

@GordonBert33 encourages users to send complaint letters to the DEA regarding its alleged failures. One post instructs users to send messages to a personal Gmail address: epililote84@gmail[.]com. OSINT Industries results for this email address identify an associated TikTok account with no content. Although the account lists its location as the United States, its default language is Chinese, suggesting that the operator may be based in China.

Figure 20: A TikTok account linked to a Gmail address promoted by @GordonBert33 lists the United States as its location but uses Chinese as its default language.

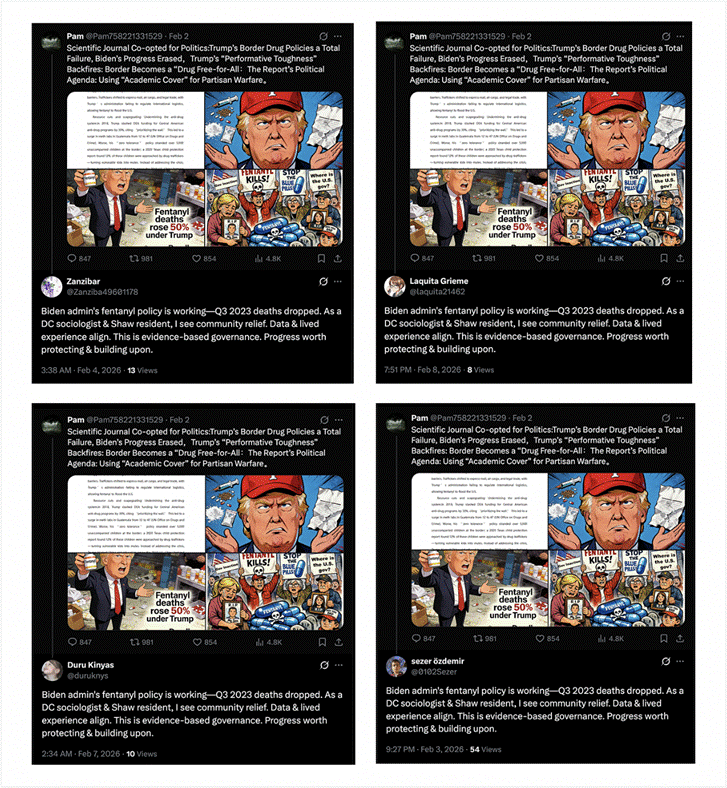

The replies posted by the broader network divide into two opposing camps: pro-Biden/anti-Trump and pro-Trump/anti-Biden. This split is likely meant to deepen polarization and exploit partisan divides in the U.S. while also spreading pro-China narratives.

A large subset of accounts attacks Trump directly. Posts claim that Trump falsely takes credit for addressing the fentanyl crisis and argue that the crisis worsened under his leadership. Various posts accuse The Washington Post of shielding him from scrutiny. Several accounts claim partisan identities, including one that writes, “As a Democrat, I have watched Trump steal our achievements for years.” Other accounts pose as Washington, D.C. residents and sociologists to assert that “Biden admin’s fentanyl policy is working.” X’s location transparency feature indicates that these accounts are based in Turkey and Indonesia, though the real operators behind the accounts may be located elsewhere.

Figure 21: Multiple accounts post identical replies attacking President Trump and claiming to live in Washington, D.C. to promote Biden-era fentanyl policies. (Link to posts: 1, 3, 4)[1]

Other accounts in the cluster attack Biden and characterize the claims made by the four primary accounts — as well as the reporting they cite — as fabrications. These posts argue that the narratives “whitewash” border failures and constitute a coordinated “smear campaign” against Trump. For example, one account writes, “MAGA flatly denies responsibility! The report is full of lies, solely to cover up the fentanyl disaster caused by the failed border control!” Additional accounts post similar replies, such as, “Fake reports can’t fool Americans! They’re just lying to cover up the supply crisis, all to excuse the Democrats’ incompetence!”

Several replies also exploit broader domestic tensions, including immigration debates. Identical posts accuse U.S. leaders of engaging in “deportation and racial discrimination,” attempting to capitalize on existing backlash against immigration enforcement.

Several accounts in the network also appear to advance broader pro-China narratives beyond the four fentanyl-related posts. Numerous accounts in the cluster push a coordinated narrative that seeks to delegitimize U.S. prosecutorial actions against the Cambodia-based Prince Group and its Cambodian-Chinese founder, Chen Zhi. Multiple posts claim that “the seizure of Prince Group’s cryptocurrency is illegal” and assert that the indictments contain “evidential flaws” or a “broken chain of evidence.” Many posts link to Chinese-language media outlets that promote similar narratives.

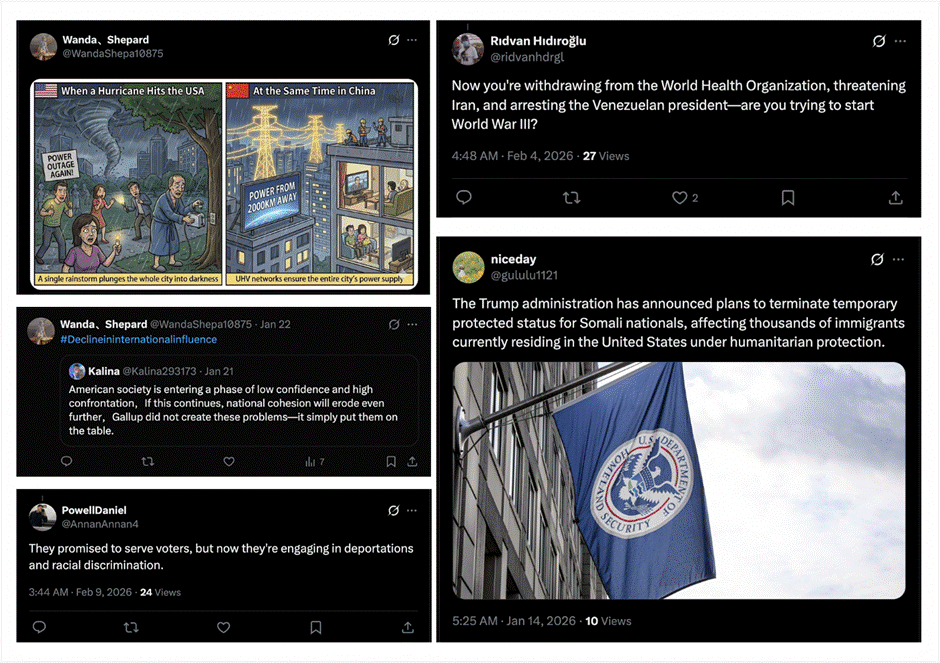

Other accounts consistently upload posts that portray the United States as unstable, hypocritical, aggressive, and in decline. Several posts characterize U.S. foreign policy as imperial overreach, referencing tensions with Iran, Venezuela, and Greenland. Others accuse the United States of undermining the “rules-based international order.” Additional posts advance a narrative of U.S. diplomatic isolation, pointing to withdrawals from international organizations like the World Health Organization, visa pauses affecting dozens of countries, and disputes with European allies.

Figure 22: Multiple accounts post content depicting the United States as unstable, aggressive, and diplomatically isolated. (Link to posts: 1, 2, 3, 4, 5)

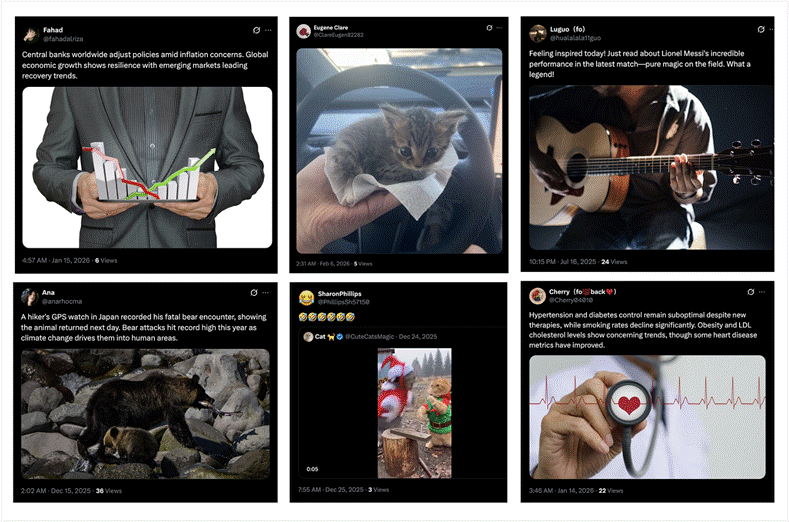

Historical posts from some accounts in the network also reveal amplification of narratives that align with previously exposed Chinese influence operations, including attacks on Falun Gong. Accounts also cross-amplify narratives targeting the Philippines and Japanese political figures seen in other clusters. Many accounts also mix political messaging with lifestyle or neutral content, a tactic consistent with the PRC-linked influence operation known as “Spamouflage” or “DRAGONBRIDGE.”

Figure 23: Accounts within the network mix lifestyle and neutral content with political narratives, behavior consistent with Spamouflage/DRAGONBRIDGE tactics. (Link to posts: 1, 2, 3, 4, 5, 6)

Cluster 6

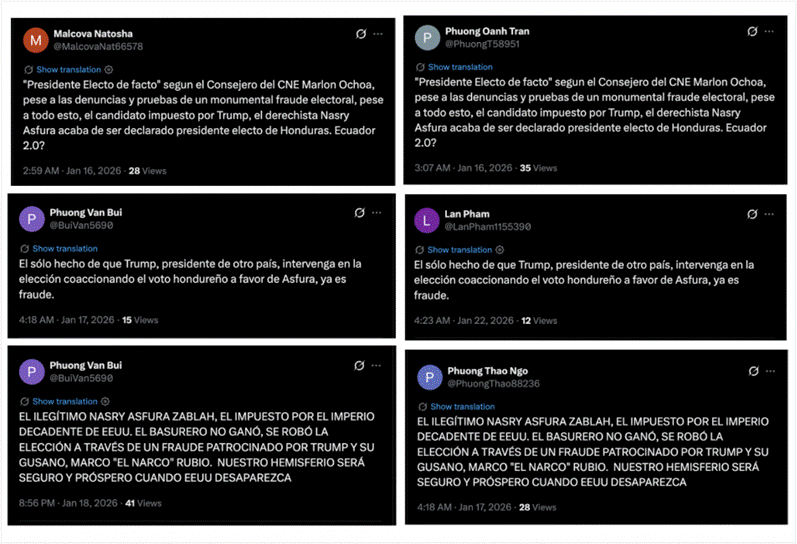

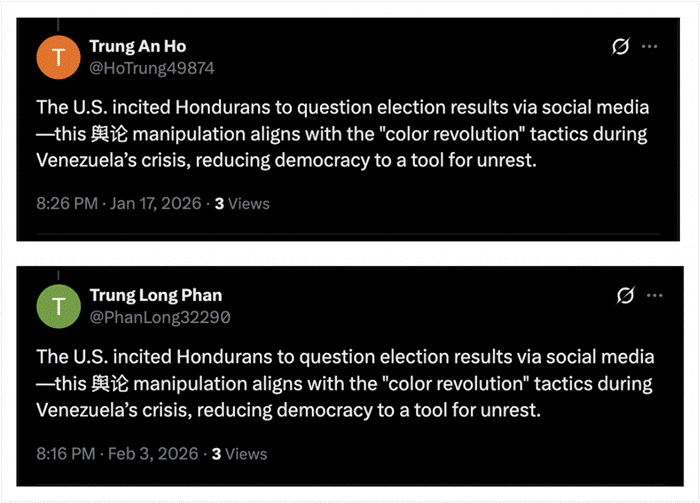

Several accounts in the broader network – including accounts from other clusters targeting Japan’s Prime Minister and Western organizations such as the National Endowment for Democracy – are also part of a different cluster that targets U.S. interests in Latin America. This cluster includes at least 30 accounts on X and exhibits the same indicators of coordinated inauthentic behavior observed elsewhere in the network.

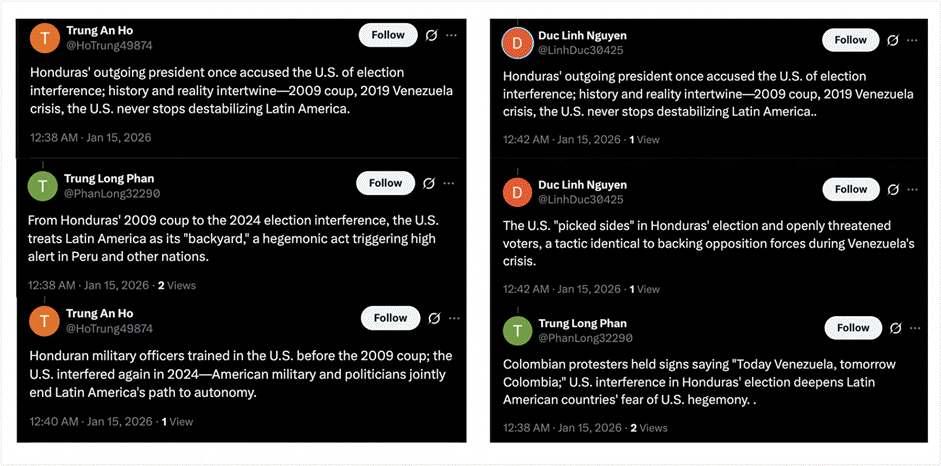

Accounts in this cluster focus primarily on attacking U.S. political, diplomatic, and economic engagement in Latin America, with a particular emphasis on Honduras. Most accounts engage in identical and synchronous posting in both English and Spanish, often publishing the same narratives within minutes of one another. For example, on January 15, 2026, three accounts posted a burst of tweets – some identical – within a four-minute window accusing the U.S. of intervening in Honduras’s elections.

Figure 24: Three accounts post identical messages within minutes of each other accusing the United States of election interference in Honduras. (Link to posts: 1, 2, 3, 4, 5, 6)

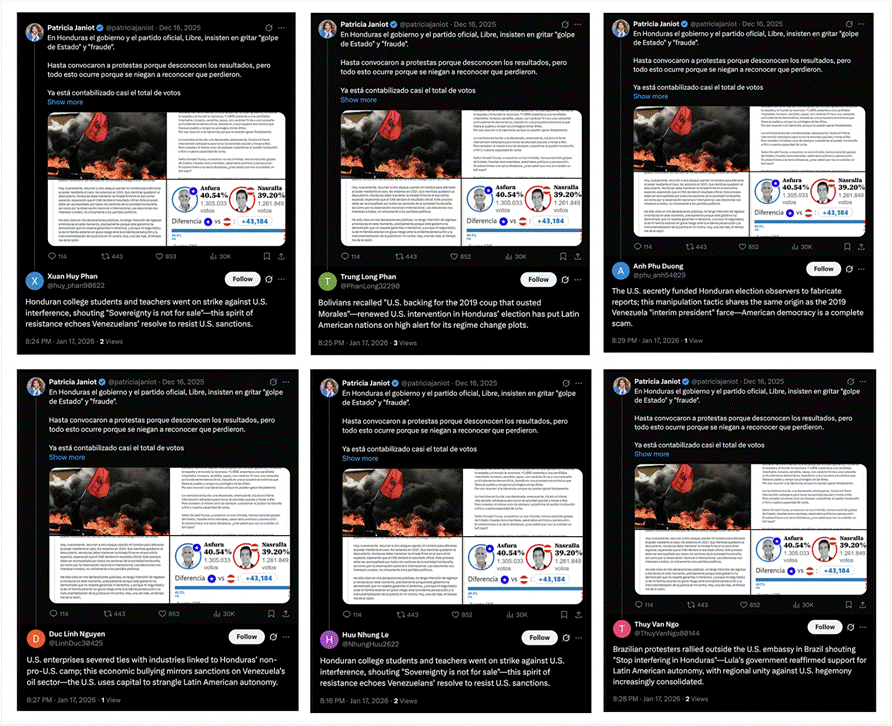
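The synchronous-posting signal described above can be sketched in a few lines of Python. This is a minimal illustration, not the method used in this analysis: it assumes posts are available as (account, UTC timestamp, text, is_retweet) records, applies the report's definition of identical content (exact string matches, excluding retweets), and uses a four-minute window taken from the January 15 example. The account names, the sample texts, and the `synchronized_groups` helper are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical sample records: (account, UTC timestamp, text, is_retweet).
posts = [
    ("acct_a", datetime(2026, 1, 15, 8, 0), "Example text one", False),
    ("acct_b", datetime(2026, 1, 15, 8, 2), "Example text one", False),
    ("acct_c", datetime(2026, 1, 15, 8, 3), "Example text one", False),
    ("acct_d", datetime(2026, 1, 15, 9, 30), "Example text one", False),
    ("acct_e", datetime(2026, 1, 15, 8, 1), "Unrelated text", False),
    ("acct_f", datetime(2026, 1, 15, 8, 1), "Example text one", True),
]


def synchronized_groups(posts, window=timedelta(minutes=4), min_accounts=2):
    """Return (text, accounts) bursts of exact-match, non-retweet posts.

    A burst holds posts with the same text published within `window`
    of the first post in that burst.
    """
    by_text = defaultdict(list)
    for account, ts, text, is_retweet in posts:
        if not is_retweet:  # retweets are excluded from the definition
            by_text[text].append((ts, account))

    groups = []

    def flush(text, burst):
        # Keep a burst only if enough distinct accounts took part.
        if len({account for _, account in burst}) >= min_accounts:
            groups.append((text, [account for _, account in burst]))

    for text, items in by_text.items():
        items.sort()  # chronological order
        burst = [items[0]]
        for item in items[1:]:
            if item[0] - burst[0][0] <= window:
                burst.append(item)
            else:
                flush(text, burst)
                burst = [item]
        flush(text, burst)
    return groups


groups = synchronized_groups(posts)
print(groups)
```

On the sample data, only the three accounts posting the same text between 08:00 and 08:03 UTC form a burst; the later post, the unrelated post, and the retweet are left out. Near-identical matching would need a fuzzier comparison than the exact-string grouping shown here.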

The cluster also engages in coordinated reply activity. Nearly all accounts replied with identical or near-identical messages to a post published by Patricia Janiot, a Colombian American journalist, who wrote that Honduras’s ruling party falsely alleged fraud and a coup to justify protests following its electoral defeat despite near-complete vote counts. In response, accounts in the network flooded the replies with claims that the United States rigged the election, framing the protests as a reaction to U.S. manipulation.

Figure 25: Multiple accounts post identical or near-identical replies within minutes under a journalist’s post on Honduras’s election results, alleging U.S. interference. (Link to posts: 1, 2, 3, 4, 5, 6)

Many accounts reuse the same given names or surnames across multiple profiles, often with only minor variations or different numerical strings attached. Examples include LinhDuc30425 and LinhDan16840171, PhuongT58951 and PhuongThao88236, LinhDuc30425 and DucAnVu194131, and PhanLong32290 and huy_phan90622.
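The handle overlap can be made explicit with a simple tokenizer. The sketch below is an illustration rather than the approach used in this analysis: it assumes that splitting handles on capital letters, underscores, and digits is a reasonable way to recover name components, and the `name_tokens` and `shared_tokens` helpers are hypothetical. The handles are the ones listed above.

```python
import re
from collections import defaultdict

# Handles cited in the text above.
handles = [
    "LinhDuc30425",
    "LinhDan16840171",
    "PhuongT58951",
    "PhuongThao88236",
    "DucAnVu194131",
    "PhanLong32290",
    "huy_phan90622",
]


def name_tokens(handle):
    """Split a handle into lowercase name tokens, dropping digits and underscores."""
    parts = re.findall(r"[A-Z][a-z]*|[a-z]+", handle)
    # Ignore single-letter fragments such as the trailing "T" in "PhuongT".
    return [p.lower() for p in parts if len(p) > 1]


def shared_tokens(handles):
    """Map each name token to the handles that contain it, keeping tokens seen in 2+ handles."""
    token_map = defaultdict(list)
    for handle in handles:
        for token in set(name_tokens(handle)):
            token_map[token].append(handle)
    return {t: hs for t, hs in token_map.items() if len(hs) >= 2}


shared = shared_tokens(handles)
for token, matched in sorted(shared.items()):
    print(token, matched)
```

On these seven handles, the tokens "linh", "duc", "phuong", and "phan" each appear in two profiles. Shared tokens alone are a weak signal, since these are common names, so they are best read alongside the posting behavior described elsewhere in this section.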

Content posted by all accounts alleges that the United States deliberately interfered in Honduras’s recent presidential election in favor of Nasry Asfura, who ultimately won the election. The network likely sought to influence the Honduran election because Nasry Asfura campaigned on restoring ties with Taiwan — a policy reversal that would weaken Beijing’s 2023 diplomatic gains.

Posts frame U.S. actions – including visa revocations and aid conditionality – as tools of coercion designed to influence the electoral outcome. Multiple accounts posted identical tweets claiming that Honduran students and teachers “went on strike against U.S. interference,” chanting slogans such as “Sovereignty is not for sale.” One post even accuses Asfura of being backed by both Trump and Netanyahu. While most content is in English, several accounts also post identical narratives in Spanish. Content posted in Spanish is often grammatically incorrect, including poor sentence structure and improper punctuation, suggesting the text was likely translated directly from Chinese.

Figure 26: Identical Spanish-language posts with grammatical errors appear across multiple accounts. (Link to posts: 1, 2, 3, 4, 5, 6)

More broadly, accounts portray U.S. involvement in Honduras as part of a long-standing pattern of American hegemony in Latin America. Posts reference the 2009 Honduran coup, the 2019 Venezuela crisis, and past U.S. actions in Haiti, Bolivia, and Brazil, arguing that Washington treats the region as its “backyard.”

The cluster also posts claims of regional backlash against U.S. actions. Various posts state that protests, strikes, and diplomatic condemnations erupted across Latin America in response to alleged U.S. interference in Honduras. Several posts point to demonstrations in Peru, Brazil, Colombia, Ecuador, and the Caribbean, portraying a united rejection of U.S. dominance. For instance, some posts allege that “Colombian protesters held signs accusing the U.S. of being a ‘terrorist state.’” Other posts accuse Washington of weaponizing sanctions, trade access, remittances, and immigration policy to pressure Honduras and neighboring states.

At the same time, the cluster frames China as a constructive alternative to U.S. influence. Posts claim that the United States threatened Honduras for cooperating with China and attempted to coerce the government into abandoning China-linked economic projects. Several accounts allege that Washington spread false claims of “Chinese election meddling” to distract from its own role. Other posts quote statements by China’s Ministry of Foreign Affairs condemning U.S. interference in Latin America and defending regional economic sovereignty.

Accounts in this cluster also amplify additional narratives aligned with pro-China and anti-U.S. messaging. Some exploit domestic U.S. controversies, including deportation debates, by circulating “shocking footage” related to ICE enforcement. Moreover, between February 11 and February 12, 2026, multiple accounts posted identical videos referencing the Epstein files using the hashtag #EpsteinFile. The cluster also posts coordinated content in Japanese attacking Hei Seki, a member of Japan’s House of Councillors, accusing him of illegitimacy, financial corruption, and acting as a “spy” or “agent.”

Several accounts also post Chinese-language content targeting the X account “Teacher Li Is Not Your Teacher,” a popular source for information censored inside China and a frequent target of PRC-linked harassment campaigns. Posts seek to delegitimize the account and undermine its credibility.

X’s location transparency feature shows that 11 accounts list Hong Kong as their location, while others report locations in South Korea, Ukraine, and the United States, often alongside indicators provided by X that suggest the use of VPNs. Posting behavior reveals two primary activity windows. The first occurs between 7:00 AM and 9:30 AM UTC (3:00–5:30 PM China Standard Time), while the second occurs between 12:00 AM and 3:00 AM UTC (8:00–11:00 AM China Standard Time). Both windows align with typical waking and working hours in mainland China – a pattern observed across other clusters in the network. Additionally, several posts alleging U.S. interference in Honduras’s elections include Chinese characters, likely the result of translation errors when converting content from Chinese to English prior to posting.

Figure 27: Two identical posts in English include Chinese characters. (Link to posts: 1, 2)
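The conversion behind the two activity windows is straightforward to reproduce. The short sketch below assumes only that China Standard Time is a fixed UTC+8 offset with no daylight saving time; the `utc_to_cst` helper and the placeholder date are hypothetical and serve only to carry the clock times.

```python
from datetime import datetime, timedelta, timezone

# China Standard Time as a fixed UTC+8 offset (no daylight saving time).
CST = timezone(timedelta(hours=8), "CST")


def utc_to_cst(hour, minute=0):
    """Convert a UTC clock time to China Standard Time, formatted as HH:MM."""
    # The date is a placeholder; only the time of day matters here.
    utc_dt = datetime(2026, 1, 15, hour, minute, tzinfo=timezone.utc)
    return utc_dt.astimezone(CST).strftime("%H:%M")


# The two activity windows reported above, as (start, end) in UTC.
windows_utc = [((7, 0), (9, 30)), ((0, 0), (3, 0))]
windows_cst = [(utc_to_cst(*start), utc_to_cst(*end)) for start, end in windows_utc]
print(windows_cst)  # [('15:00', '17:30'), ('08:00', '11:00')]
```

The same conversion applied to every post timestamp, followed by a count per hour, would give the hourly activity profile from which windows like these can be read.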

Attribution

We assess with high confidence that the network of more than 330 coordinated accounts across X and other platforms is linked to the People’s Republic of China and forms part of a PRC-aligned influence operation.

The network advances narratives that align closely with the PRC’s geopolitical interests, particularly by defending Beijing’s human rights posture, discrediting overseas critics and democracy-promotion organizations, and reframing external criticism as foreign “interference” and “subversion.” Across clusters, accounts consistently target similar institutions and individuals – especially Uyghur advocacy organizations and critics of Beijing – while amplifying themes that support PRC positions on Xinjiang, Taiwan, Hong Kong, and the South China Sea.

Even when targeting President Trump and the United States, accounts in the network advance narratives that align with Beijing’s interests. The network also poses as Democrats and Republicans by posting opposing content that exploits partisan divides within the United States. Although this tactic is more commonly associated with Russian influence operations, Chinese campaigns have demonstrated a willingness to experiment with similar polarization strategies, particularly during past U.S. presidential elections.

Other targets of the network – including the Philippines and Japan – have historically been focal points of Chinese influence operations. The Philippines has repeatedly been a target of Chinese information campaigns amid rising tensions in the South China Sea under President Ferdinand Marcos Jr.

Japan has also been targeted by state-linked operations such as the 2023 covert campaign that posed as foreign citizens and environmental activists to spread alarmist narratives about Tokyo’s release of treated wastewater. Moreover, OpenAI’s latest threat report, published in February 2026, details how an individual with ties to Chinese law enforcement attempted to use ChatGPT to plan a covert influence operation targeting Sanae Takaichi. This included generating negative content, framing Takaichi as far-right and criticizing her stance on immigration. The material was posted across social media platforms such as X, Tumblr, and Blogspot. Although the specific hashtags, posts, and imagery described in OpenAI’s report differ from the content documented here, the operational behaviors closely mirror the tactics, techniques, and procedures observed in this network, strengthening our attribution assessment. OpenAI also documented activity targeting PRC dissidents, including “Teacher Li Is Not Your Teacher,” a target that also appears in this network’s content.

The network’s tactics, techniques, and procedures (TTPs) align with historical PRC-linked influence operations. Accounts repeatedly post identical or near-identical text, use coordinated hashtag sets, and appear to rely on a shared content library. The network also operates across multiple platforms beyond X, including Tumblr, Blogspot, Quora, and YouTube – platforms previously used by Chinese influence campaigns to seed and amplify identical content. Moreover, several accounts regularly switch between posting benign or lifestyle content and coordinated pro-China political messaging, a hallmark tactic of the Spamouflage campaign designed to mask coordinated behavior.

Furthermore, a broader analysis of historical posts by accounts in the network shows targeting of specific dissidents, including Guo Wengui, Yan Limeng, and the Falun Gong movement – figures and groups frequently targeted by PRC-linked “DRAGONBRIDGE” accounts and spoofed news websites.

Technical and behavioral indicators suggest that at least a subset of the network’s operators likely work from within China. Several accounts connect via the China Android app or display Chinese as their default language, and multiple posts include Chinese characters or switch between English and Chinese hashtag variants. Additionally, posting windows for multiple clusters concentrate heavily in China Standard Time-aligned working hours.
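One of these indicators, stray Chinese characters in otherwise non-Chinese posts, lends itself to a simple automated check. The sketch below is illustrative only: it assumes that matching the main CJK Unified Ideographs block (U+4E00 to U+9FFF) is sufficient, and the `stray_cjk` helper, the 20 percent threshold, and the sample strings are all hypothetical rather than drawn from the network's posts.

```python
import re

# Main CJK Unified Ideographs block; extension blocks are not covered.
CJK = re.compile(r"[\u4e00-\u9fff]")


def stray_cjk(text, max_ratio=0.2):
    """Return the CJK characters found in a mostly non-CJK text, else an empty list.

    A text counts as "mostly non-CJK" when CJK characters make up at most
    `max_ratio` of its alphabetic characters.
    """
    found = CJK.findall(text)
    letters = [ch for ch in text if ch.isalpha()]
    if found and letters and len(found) / len(letters) <= max_ratio:
        return found
    return []


# Hypothetical sample strings, not actual posts from the network.
mixed = "Foreign interference in the 选举 must stop"
english_only = "Foreign interference in the election must stop"
chinese_only = "这是中文"

print(stray_cjk(mixed))
print(stray_cjk(english_only))
print(stray_cjk(chinese_only))
```

The ratio test keeps fully Chinese-language posts from being flagged, so only English or Spanish text with a few leftover characters is returned. The same range also matches Japanese kanji, so Japanese-language posts would need separate handling.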

Conclusion

The cross-platform network of over 330 social media accounts identified in this report reveals how PRC-aligned influence operations can operate at scale without necessarily achieving organic engagement. The campaign does not appear to have reached “breakout” levels, where its narratives spread beyond the originating accounts or enter wider public discourse. Under Ben Nimmo’s Breakout Scale, the network qualifies as a Category Two operation: coordinated and multi-platform, but largely confined to amplification within its own network.

The network reveals narratives and tactics Beijing is willing to deploy and test across regions. These include well-documented targets of Chinese influence operations, such as deflecting criticism of China’s human rights record, as well as less commonly observed narratives, including accusations of U.S. election interference in Latin America and efforts to shape the fentanyl debate in the United States. Content in the network extends beyond China’s more common human rights and Taiwan messaging and suggests an effort to adapt information warfare to target U.S. domestic debates and hemispheric politics.

A single coordinated network targets audiences across East Asia, Southeast Asia, North America, and Latin America, shifting languages, narratives, and personas. As U.S. policy places renewed emphasis on influence in the Western Hemisphere under the so-called ‘Donroe Doctrine,’ countering Chinese influence in the region will also require Washington to beat Beijing in the information environment.

[1] The second account was taken down.
